While researching the differences in online safety for LGBTQ+ and straight people, we did a deep dive into Dark Patterns and decided to write a separate article on the topic. Why? Because amazingly, or rather scarily, even some respectable and legitimate companies use these tricks to get users to share or do more than they want to.

From difficult-to-cancel subscriptions, buried terms and disguised ads to tricks for obtaining data you are not willing to share freely: many creative Dark Patterns are tricking you daily.

Awareness of Dark Patterns is (finally) rising, even in the regulatory field. It took long enough, considering the term was first introduced by Harry Brignull in 2010. Many governments have either started looking into the topic and considering legislation or are already drafting it.

Dark patterns are frustrating enough for users that dedicated hashtags, accounts and communities have sprung up, such as #darkpattern and @darkpatterns on Twitter and a more explicitly named one on Reddit: assholedesign.

But while dark patterns are annoying, it is important to understand the danger they can pose to you, your finances and your personal data, to recognize them, and to know how to avoid or work around them.

Who would have thought some of these trustworthy companies resort to dark patterns?

Source: “Hall of Shame” by Deceptive Design

Penalties & Fines paid by what are (perceived as) trustworthy companies:

Microsoft was fined for installing non-essential cookies without valid consent and for making it harder to refuse cookies than to accept them by placing the refusal option on a second layer. (2022)

Google LLC and Google Ireland Limited were fined for requiring users to go through several steps to refuse cookies and for not providing a “refuse all” button in the first layer of the cookie notice. (2021)

Epic (Fortnite) was fined for violating children’s privacy (collecting voice and text data from players under the age of 13 without parental consent) and engaging in dark pattern deception to manipulate users into making unwanted in-game purchases. (2022)

Source: Deceptive Design

Feeling tricked during your online experience? If not yet, we will see at the end of this article 😉. In any case, you are not being paranoid if you do in fact feel tricked.

Several recent studies analyzing the prevalence of dark patterns have shown that they are far more widespread than one might think:

Mathur et al. found 800 dark patterns on shopping websites

Utz et al. analyzed the cookie banner notices of a random sample of 1,000 of the most popular websites in the EU, finding that over 50% of the banners contained at least one dark pattern

Di Geronimo et al. focused on Android apps and found that more than 95% of the 200 most popular apps contain at least one dark pattern

Nouwens et al. examined 680 of the most popular consent management platforms – services that help websites manage user privacy consent – on the top 10,000 websites in the U.K., finding that only 11.8% of websites do not contain any dark patterns

Soe et al. manually collected and analyzed 300 data collection consent notices from Scandinavian and English news websites. The authors found that nearly every notice (297) contained at least one dark pattern.

The line between ethical design and dark patterns is very thin. The Ad Watchers have dedicated a podcast episode to this tricky topic.

Dark Patterns: Definition

A Dark Pattern (aka Deceptive Design) is a user interface that has been carefully crafted to trick users into doing things they did not intend to do. It describes instances where designers use their knowledge of human behavior (e.g., psychology) and the desires of end users to implement deceptive functionality that is not in the user’s best interest. There are different Dark Patterns to be aware of, as you will see in the next part, and some instances combine more than one Dark Pattern.

Dark Pattern Types

Aesthetic Manipulation

Designs crafted to focus user attention on a specific thing with the goal of convincing or distracting.

Disguised Ads – Ads that are disguised as other kinds of content or even navigation in order to get you to click. Examples include advertisements formatted to falsely appear as unbiased reviews or as articles written by independent journalists, and ranking lists or comparison-shopping sites presented as neutral and unbiased when they are in fact based on advertising dollars.

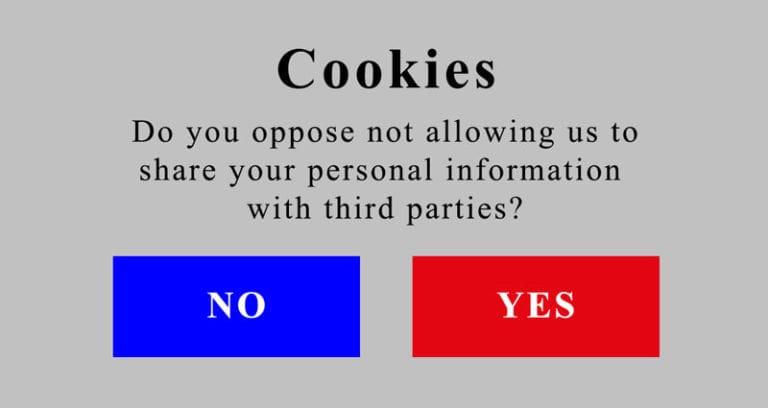

Trick Questions – Questions designed to appear to ask one thing but, when read carefully, ask another thing entirely. The goal is to trick you into giving an answer you did not intend to give.

Toying with Emotion – Persuading a user to take an action through the targeted use of color, language or style. A good example of Toying with Emotion is embedded in some profile deactivation processes: users are shown friends who “would miss them”, and counterarguments are presented against whatever reason they selected for deactivating their account.

False Hierarchy – Convincing a user to make a specific selection by giving one or more options visual or interactive precedence over others. For example, when trying to unsubscribe from certain newsletters, the design encourages users to click a large, colorful “no, cancel” button, which cancels the unsubscribe request rather than the subscription. To actually cancel the subscription, the user must select a small, light gray, hard-to-read text option under the large colorful button.

Asymmetric Choice

A design that asymmetrically emphasizes one choice over another.

Preselection – when options are selected by default before any user interaction. Examples include:

- Add-on products such as an extended warranty are automatically pre-selected.

- “Accept tracking” cookies box is pre-checked.

- The site automatically shows the most expensive option instead of a cheaper or free one.
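To make the pre-checked box example more tangible, here is a minimal sketch of how one could spot checkboxes that are already ticked in a page's HTML before the user has touched anything. It uses only Python's standard `html.parser` module. The sample form and the field names in it (`extended_warranty`, `accept_tracking`, `newsletter`) are made up for illustration, and real sites often set defaults via scripts, which this simple check would not catch.

```python
from html.parser import HTMLParser


class PrecheckedFinder(HTMLParser):
    """Collects the names of checkboxes that are checked by default."""

    def __init__(self):
        super().__init__()
        self.prechecked = []

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        # A bare attribute such as "checked" is reported with the value None,
        # so we test for the presence of the key rather than its value.
        is_checkbox = (attributes.get("type") or "").lower() == "checkbox"
        if tag == "input" and is_checkbox and "checked" in attributes:
            self.prechecked.append(attributes.get("name") or "(unnamed)")


def find_prechecked(html):
    """Return the names of all checkboxes that are pre-selected in the HTML."""
    finder = PrecheckedFinder()
    finder.feed(html)
    return finder.prechecked


# Hypothetical checkout form, invented for this example.
SAMPLE_FORM = """
<form>
  <input type="checkbox" name="extended_warranty" checked> Extended warranty
  <input type="checkbox" name="accept_tracking" checked="checked"> Accept tracking
  <input type="checkbox" name="newsletter"> Newsletter
</form>
"""

print(find_prechecked(SAMPLE_FORM))
```

For the sample form, the two pre-selected boxes are reported while the unchecked newsletter box is not.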

Subverting Privacy – Get users to share more personal information than they want to. Examples include:

- Asking users for consent but veiling what exactly they are agreeing to share.

- Stating that the site collects the information for one purpose but then using it for others or even sharing it with additional parties.

- Making it difficult for users to find and change default settings that maximize data collection.

Trick Questions – Same as described above under “Aesthetic Manipulation”.

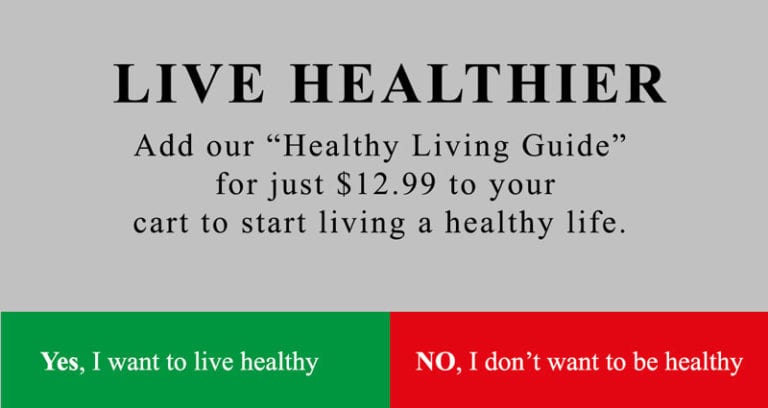

Confirmshaming

Use shaming to drive users to act, e.g., word an option to decline in a way that shames visitors into accepting.

False Scarcity/False High Demand

Displaying a false low-stock or high-demand message to pressure the user into buying immediately.

False Social Proof

Making false claims about activity on a site or interest in a product by other users or even celebrities, or using endorsements from customers who have been compensated for them, who work for the company, or whose experience does not reflect that of a typical customer under the same circumstances.

Forced Action

Any situation requiring a user to perform a specific action to access (or continue to access) a functionality.

Friend Spam (Social Pyramid/Address Book Leeching)

When a product asks for your email or social media permissions under the pretense of a desirable outcome (e.g., finding friends), but then spams all your contacts with a message that claims to be from you. Pressuring you to invite your friends is also a form of Friend Spam.

Gamification

When a service or aspects of it can only be “earned”. Mobile games occasionally give players levels that are impossible to complete, trying to force the user into purchasing extra lives or power-ups.

Hidden Information

Not making options or actions relevant to the user readily accessible, or hiding material information, significant product limitations, etc. This Dark Pattern attempts to disguise relevant information as irrelevant. It manifests as options or content hidden in fine print, behind unclearly named hyperlinks, behind pop-ups that only appear if you happen to hover over the right thing on the page, or even in discolored (and hard-to-read) text. A good example is coloring an unsubscribe link the same as the background to make it more difficult to see and hence more difficult to unsubscribe.
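How hard "hard to read" is can actually be measured. The sketch below computes the contrast ratio between a text color and its background using the relative luminance formula from the WCAG 2.x accessibility guidelines, where 4.5:1 is the minimum recommended for normal-size text. The function names and the sample colors are our own choices for illustration, not something taken from a specific site.

```python
def relative_luminance(hex_color):
    """Relative luminance of an sRGB color such as '#eeeeee' (WCAG 2.x formula)."""
    hex_color = hex_color.lstrip("#")
    channels = [int(hex_color[i:i + 2], 16) / 255 for i in (0, 2, 4)]
    # Convert each gamma-encoded channel to linear light.
    linear = [
        c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4
        for c in channels
    ]
    red, green, blue = linear
    return 0.2126 * red + 0.7152 * green + 0.0722 * blue


def contrast_ratio(foreground, background):
    """Contrast ratio between two colors, from 1.0 (identical) up to 21.0."""
    lum_a = relative_luminance(foreground)
    lum_b = relative_luminance(background)
    lighter, darker = max(lum_a, lum_b), min(lum_a, lum_b)
    return (lighter + 0.05) / (darker + 0.05)


def is_hard_to_read(foreground, background, minimum=4.5):
    """True if the contrast falls below the given minimum (4.5:1 by default)."""
    return contrast_ratio(foreground, background) < minimum


# Illustrative colors: a light gray unsubscribe link on a white background.
print(round(contrast_ratio("#eeeeee", "#ffffff"), 2))
print(is_hard_to_read("#eeeeee", "#ffffff"))
```

An unsubscribe link in exactly the background color has a ratio of 1.0, the lowest possible value, while black text on white reaches the maximum of 21.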

Interface Interference

The user interface is manipulated to:

- confuse,

- favor some actions over others or

- limit the discovery of possibilities of an action important to the user.

Misdirection

This is very common in software installers. For example, an installer presents the user with a typical continuation button like “I accept these terms” while obscuring that the consent applies to a different program than the one the user intended to install. Users typically accept the terms by force of habit, which in this case allows the unrelated program to be installed. The makers of the unrelated programs pay for each installation that the installer procures. The option to skip installing the unrelated program tends to be much less prominently displayed or even counter-intuitive (e.g., it implies declining the terms of service).

Sometimes “Next” buttons are used in website registration steps that ask for unnecessary personal information, obscuring the fact that one could click “Next” without sharing that information. It basically tricks you into doing or sharing something you would not do or share if it were clear that it is optional.

Nagging

Manifests as a repeated (and very annoying) intrusion during your normal interactions. Nagging may include pop-ups obscuring the interface or any other actions that obstruct or otherwise redirect the user’s focus.

Obstruction

Making a process way more difficult than it needs to be. The intent is to dissuade the user from certain actions.

Immortal Accounts – Making it extra hard or impossible to delete an account.

Intermediate Currency – Users spend real money to purchase a virtual currency to use a service or purchase goods. Most often seen with in-app purchases (video or mobile games). With intermediate currencies, the user loses their feel for the real dollar value spent. This can lead users to spend virtual currency differently (more generously) than they would real money.
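A bit of arithmetic shows how quickly the real price disappears from view. The sketch below assumes a purely hypothetical game that sells coins only in fixed bundles (1,000 coins for $9.99) and an item priced at 1,200 coins. These numbers and the function name are invented for illustration.

```python
import math


def real_cost_cents(item_price_coins, bundle_coins, bundle_price_cents):
    """Return (money actually spent in cents, coins left over after the purchase)."""
    # Coins are only sold in whole bundles, so round up.
    bundles_needed = math.ceil(item_price_coins / bundle_coins)
    spent_cents = bundles_needed * bundle_price_cents
    leftover_coins = bundles_needed * bundle_coins - item_price_coins
    return spent_cents, leftover_coins


# Hypothetical example: item costs 1,200 coins, bundles hold 1,000 coins for $9.99.
spent, leftover = real_cost_cents(1200, 1000, 999)
print(f"Real money spent: ${spent / 100:.2f}, coins left over: {leftover}")
```

Under these assumptions, the "1,200 coin" item costs $19.98 in real money and leaves 800 coins stranded in the account, which nudges the user toward yet another purchase.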

Prevention of Price Comparison – make it impossible for you to make an informed decision by making it hard for you to compare items and their pricing.

Roach Motel (Roadblocking Cancellation) – easy way in, difficult way out. For example: businesses that require subscribers to print and mail their opt-out or cancellation request.

Privacy Zuckering

A practice that tricks users into sharing more information than they intended to, either unknowingly or through practices that obscure or delay the option to opt out of sharing their private information.

In case you were wondering: yes, this dark pattern is indeed named after Facebook CEO Mark Zuckerberg 😉

Sneaking

Hiding, disguising, or delaying the disclosure of information relevant to the user.

Bait-and-switch – Advertising a free or greatly discounted product/service that is either unavailable or stocked in small quantities. After displaying the product’s unavailability, the page presents products of higher prices and/or lesser quality.

Another example is pop-ups with an X to close them, but instead of closing the window, something else happens.

Yet another option is to put something else where you would expect the X (close window option) to be. In that case you are looking at manipulation of muscle memory, which increases the likelihood that the user triggers it accidentally.

Basket Sneaking – Somewhere in the purchasing journey, the website sneaks an additional item into your basket. The only way to get rid of it is via an opt-out button or checkbox on a prior page.

Forced Continuity – When your credit card silently starts getting charged without any warning after a free trial, often combined with making it difficult to cancel the membership/service, you are looking at forced continuity. In other words: silently rolling over from free to paid plans, taking advantage of the fact that users forget to check their subscriptions and expiration dates.

Drip Pricing – Initially advertising only part of the total price for a product and then imposing other mandatory charges later on in the buying process.

Hidden Costs – costs that were not obvious before and only come to light in the last steps of ordering.

Trick Wording

Misleading the user into taking a specific action by presenting misleading or at least confusing language.

Urgency

Includes tactics like a baseless countdown timer, false limited-time offers or false discount claims that create pressure to buy immediately.

Example: “HURRY! SALE ENDS 00:14:23” but then the clock just goes away or it resets when it times out.
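One way to check whether such a timer is genuine is to note what it shows at different moments and see whether it always points to the same deadline. The sketch below is a rough illustration of that idea. The observation format (seconds since you first opened the page, seconds left on the clock) and the sample values are our own assumptions.

```python
def implied_deadlines(observations):
    """For each (seen_at, remaining) pair, compute when the sale would end."""
    return [seen_at + remaining for seen_at, remaining in observations]


def looks_like_fake_countdown(observations, tolerance_seconds=2):
    """True if the observations imply different deadlines, i.e. the timer was reset."""
    if len(observations) < 2:
        return False  # a single reading cannot reveal a reset
    deadlines = implied_deadlines(observations)
    return max(deadlines) - min(deadlines) > tolerance_seconds


# Hypothetical readings: the timer shows 00:14:23 (863 seconds) on first visit,
# counts down normally for ten minutes, then shows 00:14:23 again later.
resetting_timer = [(0, 863), (600, 263), (900, 863)]
honest_timer = [(0, 863), (600, 263)]

print(looks_like_fake_countdown(resetting_timer))
print(looks_like_fake_countdown(honest_timer))
```

A real deadline stays put no matter when you look, so every reading of an honest timer implies the same end time. A timer that restarts implies a later deadline each time it resets.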

Deceptive Design has created a pattern library with the specific goal of naming and shaming deceptive user interfaces and the companies that use them. You can find some unexpected names and definitely interesting examples of deceptive design there. We found it to be a very helpful resource.

So how about now? Feeling tricked? 😊